Poster Session A

Juvenile idiopathic arthritis (JIA) and pediatric joint disorders

Session: (0345–0379) Pediatric Rheumatology – Clinical Poster I: JIA

0353: Validation of Claims-based Algorithms for Newly Diagnosed Juvenile Idiopathic Arthritis Using Machine Learning Methods

Sunday, November 12, 2023

9:00 AM - 11:00 AM PT

Location: Poster Hall

Patricia Hoffman, DO (she/her/hers)

Rutgers Robert Wood Johnson Medical School

New Brunswick, NJ, United States

Disclosure information not submitted.

Abstract Poster Presenter(s)

Patricia Hoffman¹, Lauren Parlett², Daniel Beachler², Daniel Reiff³, Sarah McGuire¹, Sonia Pothraj¹, Lakshmi Moorthy¹, Cynthia Salvant⁴, Dawn Koffman⁵, Sanika Rege⁵, Cecilia Huang⁵, Matthew Iozzio⁵, Kevin Schott², Kevin Haynes⁶, Amy Davidow⁷, Stephen Crystal⁸, Tobias Gerhard⁵, Brian Strom⁹, Carlos Rose¹⁰ and Daniel Horton⁴

¹Rutgers Robert Wood Johnson Medical School, New Brunswick, NJ; ²Carelon, Wilmington, DE; ³University of Alabama at Birmingham, Birmingham, AL; ⁴Department of Pediatrics, Rutgers Robert Wood Johnson Medical School, New Brunswick, NJ; ⁵Rutgers Center for Pharmacoepidemiology and Treatment Science, New Brunswick, NJ; ⁶Janssen Pharmaceuticals, Raritan, NJ; ⁷New York University, New York, NY; ⁸Rutgers University, New Brunswick, NJ; ⁹Rutgers, State University of New Jersey, New Brunswick, NJ; ¹⁰Thomas Jefferson University, Philadelphia, PA

Background/Purpose: Administrative claims databases represent important settings for studying large populations with juvenile idiopathic arthritis (JIA), but prior efforts to validate diagnostic algorithms for JIA using administrative data have been limited to potentially non-generalizable settings (e.g., specific clinics or health care systems). We aimed to develop and validate algorithms for new diagnoses of JIA in a large claims database using rule-based and machine learning-based approaches.

Methods: We performed a cross-sectional validation study using US commercial health plan data (2013-2020). We identified children diagnosed with JIA (ICD-9-CM: 696.0, 714, 720; ICD-10-CM: L40.5, M05, M06, M08, M45) before age 18 following ≥12 months of baseline continuous enrollment without JIA diagnosis or immunosuppression. JIA diagnoses were based on 3 previously validated definitions: 1) rheumatologist's diagnosis plus orders for ≥2 specific laboratory tests; 2) ≥2 outpatient diagnoses 8-52 weeks apart; or 3) 1 inpatient diagnosis. Charts from a random subset of subjects meeting each definition were abstracted and independently adjudicated by clinical experts; discrepancies were resolved by a third expert or, where necessary, consensus. Incident JIA was defined as definite or probable JIA diagnosed in the prior 4 months. Using data from 1 year before through 1 year after first JIA diagnosis, we then created candidate predictor variables from demographics, diagnoses, medications, procedures, and specialty of clinicians diagnosing JIA. After applying a simulation-based balancing method (Synthetic Minority Oversampling Technique, SMOTE), we selected optimal logistic regression regularization hyperparameters using 10-fold cross-validation. Model variables were used to score observations, and sensitivity, specificity, and positive predictive value (PPV) [95% confidence interval (CI)] were assessed at different thresholds of predicted JIA probability.
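The balancing step named in the Methods can be illustrated with a minimal sketch of the core SMOTE idea: each synthetic case is placed at a random point on the line segment between a minority-class case and one of its nearest minority-class neighbours. This is not the authors' code. The function name `smote_like`, the toy feature vectors, and the choice of `k` are all assumptions made for illustration, and the regularized logistic regression with 10-fold cross-validation is not shown.

```python
import random


def smote_like(minority, n_new, k=2, seed=0):
    """Create n_new synthetic points by interpolating between a randomly
    chosen minority point and one of its k nearest minority neighbours.

    Illustrative only: a hypothetical helper, not the study's implementation.
    """
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # Rank the other minority points by squared Euclidean distance to base.
        others = [p for p in minority if p is not base]
        others.sort(key=lambda p: sum((a - b) ** 2 for a, b in zip(p, base)))
        neighbour = rng.choice(others[:k])
        u = rng.random()  # position along the segment from base to neighbour
        synthetic.append(tuple(b + u * (n - b) for b, n in zip(base, neighbour)))
    return synthetic


# Toy minority-class feature vectors (made-up numbers, two features each).
minority = [(1.0, 2.0), (1.5, 1.8), (2.0, 2.2), (1.2, 2.5)]
new_points = smote_like(minority, n_new=6)
print(len(new_points))
```

Because every synthetic point is a convex combination of two observed cases, it always falls within the range spanned by the observed minority class. This is why counts that include such points (as in Figure 2) describe the balanced data set rather than the reviewed charts alone.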

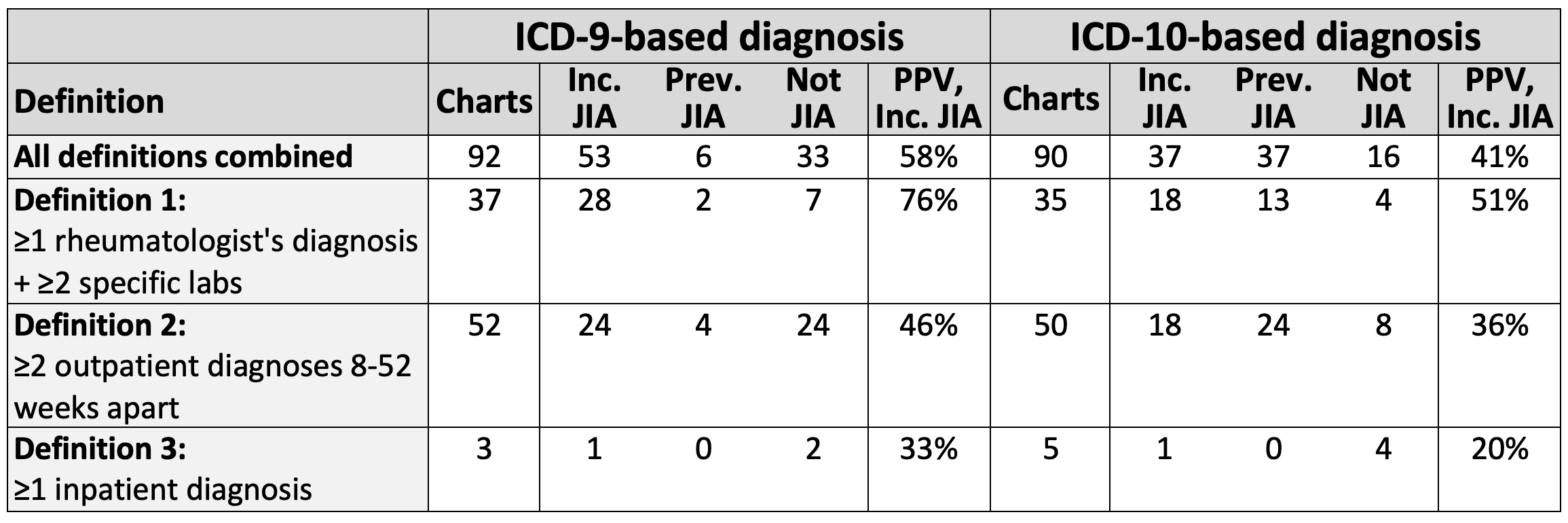

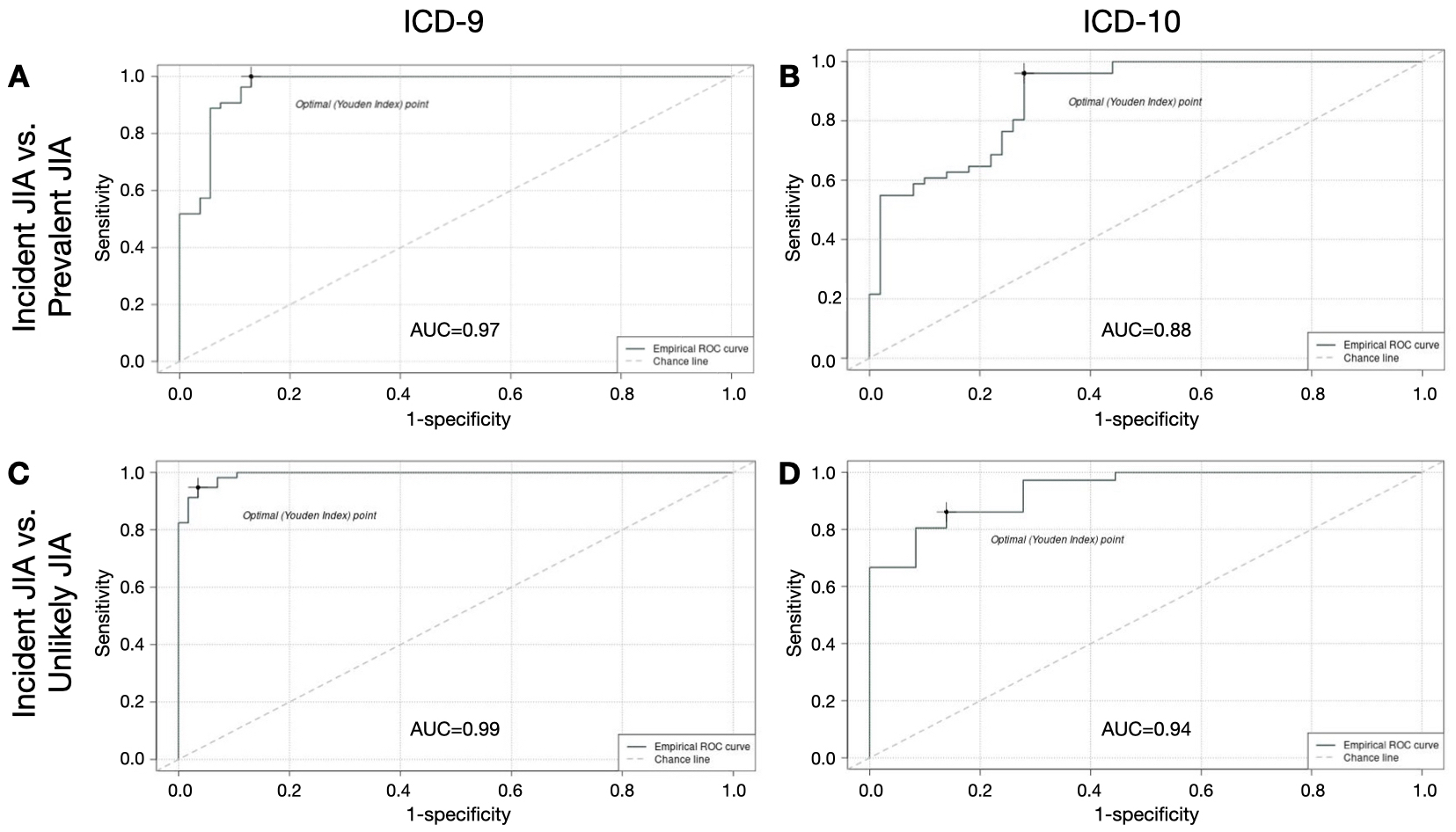

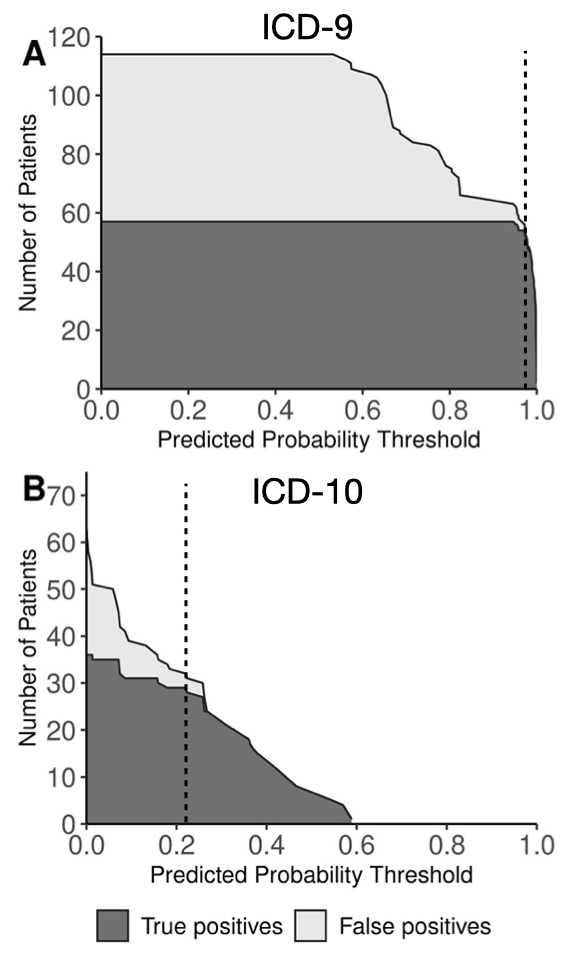

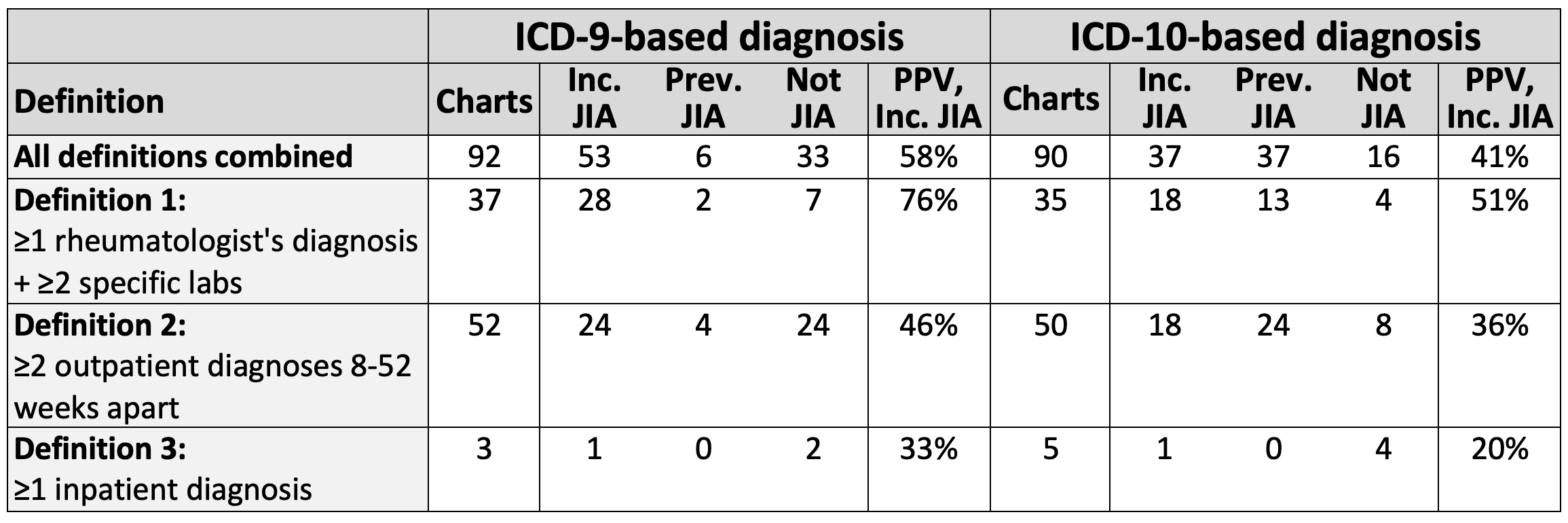

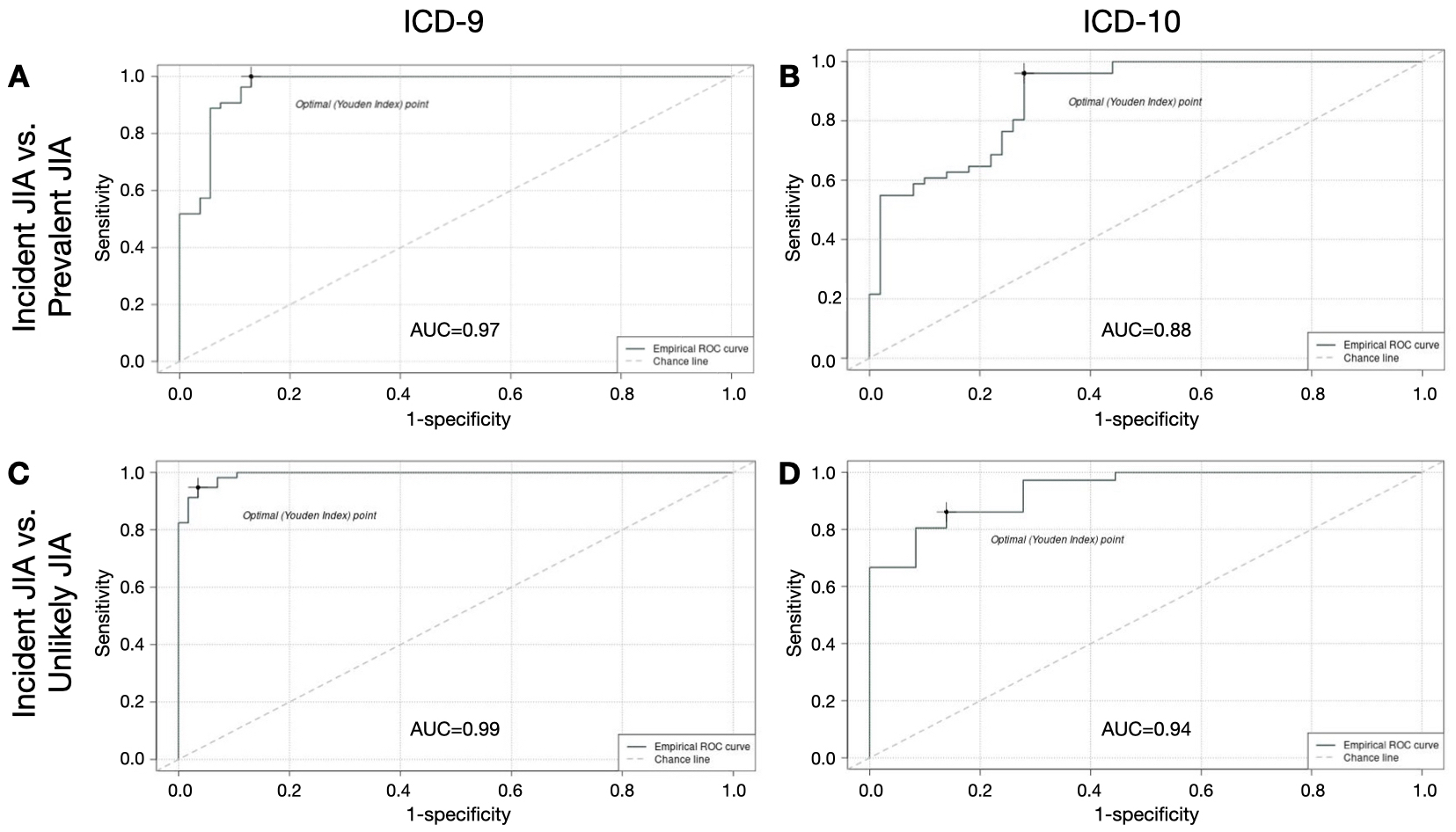

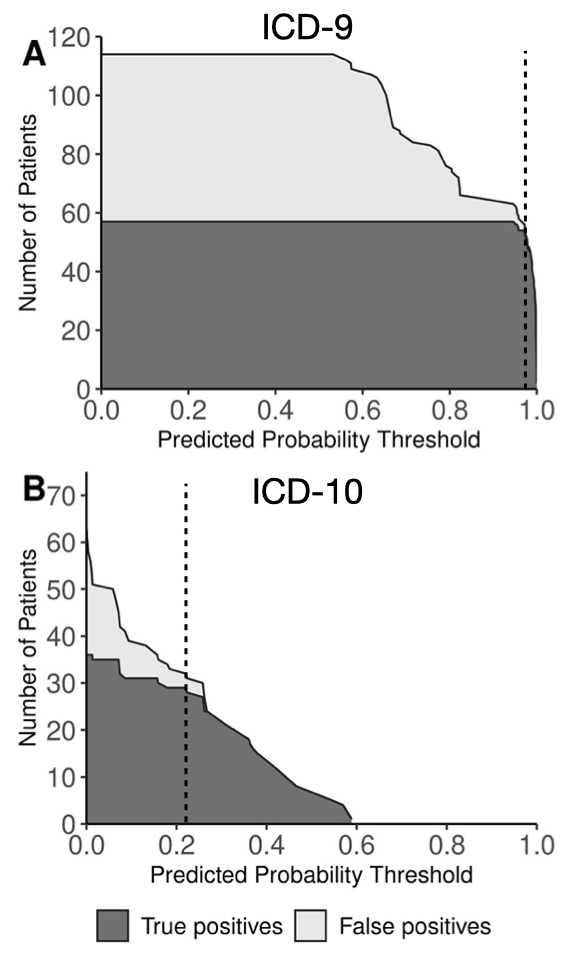

Results: Of 182 eligible charts reviewed (92 ICD-9-based, 90 ICD-10-based), 133 had definite/probable JIA (ICD-9 64%, ICD-10 82%). Of JIA diagnoses, 90 were incident (ICD-9 90%, ICD-10 50%). Rule-based algorithms had limited PPV for incident JIA (ICD-9 58%, ICD-10 41%) (Table). Use of machine learning-based algorithms enabled excellent discrimination between incident and prevalent JIA (ICD-9 AUC 0.97, ICD-10 AUC 0.88) and between incident JIA and unlikely JIA (ICD-9 AUC 0.99, ICD-10 AUC 0.94) (Figure 1). Specific predicted probability thresholds yielded excellent test characteristics for differentiating incident JIA from unlikely JIA (ICD-9: sensitivity 95%, specificity 96%, PPV 96% [95% CI 96-100%]; ICD-10: sensitivity 81%, specificity 92%, PPV 91% [95% CI 84-97%]) (Figure 2).

Conclusion: Machine learning-based diagnostic algorithms for incident JIA enhanced traditional rule-based algorithms in identifying new diagnoses of JIA using ICD-9 and ICD-10 codes within a large US claims database. External validation of these models is warranted, but these algorithms will facilitate use of administrative data to study JIA diagnosis, management, and outcomes in large populations.

P. Hoffman: None; L. Parlett: Elevance Health, 3, Sanofi, 5; D. Beachler: Elevance Health, 3; D. Reiff: None; S. McGuire: None; S. Pothraj: None; L. Moorthy: Bristol-Myers Squibb (BMS), 12, Site PI for abatacept registry BMS; C. Salvant: None; D. Koffman: None; S. Rege: None; C. Huang: None; M. Iozzio: None; K. Schott: None; K. Haynes: Janssen, 3; A. Davidow: None; S. Crystal: None; T. Gerhard: None; B. Strom: AbbVie/Abbott, 2, Consumer Healthcare Products Association, 2; C. Rose: None; D. Horton: Danisco USA, Inc., 5.

Table. Accuracy of rule-based algorithms for incident JIA. ICD, International Classification of Diseases; Inc., incident; JIA, juvenile idiopathic arthritis; PPV, positive predictive value; Prev., prevalent. Numbers of charts are shown by claims-based definition and adjudicated diagnosis. PPV represents the percentage with definite or probable incident JIA among those meeting the claims-based definition. Specific labs eligible for Definition 1 were antinuclear antibody, rheumatoid factor, anti-cyclic citrullinated protein antibody, and HLA-B27.
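As an illustration of what a rule-based claims definition looks like in practice, the sketch below checks Definition 2 from the Methods (≥2 outpatient diagnoses 8-52 weeks apart) against a list of diagnosis dates. The function name `meets_definition_2`, the inclusive handling of the 8- and 52-week endpoints, and the example dates are assumptions. The abstract does not specify how the interval boundaries were treated.

```python
from datetime import date
from itertools import combinations


def meets_definition_2(outpatient_dx_dates, min_weeks=8, max_weeks=52):
    """Return True if any two outpatient diagnosis dates are 8-52 weeks apart.

    Endpoints are treated as inclusive here (an assumption).
    """
    for earlier, later in combinations(sorted(outpatient_dx_dates), 2):
        gap_days = (later - earlier).days
        if min_weeks * 7 <= gap_days <= max_weeks * 7:
            return True
    return False


# Hypothetical patients: diagnosis codes 98 days apart and 21 days apart.
qualifies = meets_definition_2([date(2016, 1, 5), date(2016, 4, 12)])
too_close = meets_definition_2([date(2016, 1, 5), date(2016, 1, 26)])
print(qualifies, too_close)
```

A rule like this says nothing about whether the diagnosis is new, which is consistent with the limited PPV for incident JIA reported for the rule-based definitions.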

Figure 1. ROC curves for machine learning-based algorithms for incident JIA. AUC, area under the curve; ROC, receiver operating characteristic; ICD, International Classification of Diseases; JIA, juvenile idiopathic arthritis. ROC curves show balance of sensitivity and specificity of machine learning-based algorithms for incident JIA based on comparisons with prevalent JIA (ICD-9 in A, ICD-10 in B) and with unlikely JIA (ICD-9 in C, ICD-10 in D). The Youden Index (dot on ROC curve) represents the maximum value of (Sensitivity + Specificity – 1).
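The Youden Index described in the caption can be computed directly from predicted probabilities and adjudicated labels. The sketch below is a minimal illustration on made-up scores. The function names and the convention that a score at or above the threshold counts as positive are assumptions, not details taken from the abstract.

```python
def roc_points(scores, labels):
    """Return (threshold, sensitivity, specificity) for each distinct score,
    calling a case positive when its score is >= the threshold."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    points = []
    for t in sorted(set(scores)):
        tp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 1)
        tn = sum(1 for s, y in zip(scores, labels) if s < t and y == 0)
        points.append((t, tp / n_pos, tn / n_neg))
    return points


def youden_optimum(scores, labels):
    """Pick the ROC point that maximizes Sensitivity + Specificity - 1."""
    return max(roc_points(scores, labels), key=lambda p: p[1] + p[2] - 1)


# Made-up predicted probabilities; 1 = incident JIA, 0 = comparison group.
scores = [0.05, 0.10, 0.30, 0.35, 0.60, 0.80, 0.90, 0.95]
labels = [0, 0, 0, 1, 1, 0, 1, 1]
threshold, sens, spec = youden_optimum(scores, labels)
print(threshold, sens, spec)
```

In this toy data the best cut point is 0.35, where all four positives are captured and three of four negatives are excluded (index 0.75). The Youden Index weights sensitivity and specificity equally, so a study that prioritizes PPV may prefer a different threshold.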

Figure 2. Impact of predicted probability thresholds from machine learning-based models on accuracy of diagnoses of incident JIA compared with unlikely JIA. CI, confidence interval; ICD, International Classification of Diseases; PPV, positive predictive value. Curves show numbers of true positive (dark grey) and false positive (light grey) cases of incident JIA at different thresholds of predicted probability from machine learning-based models comparing children with incident JIA and children unlikely to have JIA based on ICD-9 codes (A) and ICD-10 codes (B). Numbers reflect the total study population, including simulated cases generated by application of a balancing method (Synthetic Minority Oversampling Technique, SMOTE). Threshold values yielding excellent test characteristics (dotted lines) were 0.97 for ICD-9 (sensitivity 95%, specificity 96%, PPV 96% [95% CI 96-100%]) and 0.22 for ICD-10 (sensitivity 81%, specificity 92%, PPV 91% [95% CI 84-97%]).
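To make the threshold trade-off concrete, the sketch below counts true and false positives at a given predicted-probability cut-off and derives sensitivity, specificity, and PPV from them. The probabilities and labels are invented for illustration and do not reproduce the study's results. Only the two threshold values (0.97 and 0.22) are borrowed from the caption, and the function name is hypothetical.

```python
def metrics_at_threshold(probs, labels, threshold):
    """Confusion counts and test characteristics when a case is called
    positive if its predicted probability is >= threshold."""
    tp = fp = tn = fn = 0
    for p, y in zip(probs, labels):
        if p >= threshold:
            if y == 1:
                tp += 1
            else:
                fp += 1
        else:
            if y == 1:
                fn += 1
            else:
                tn += 1
    return {
        "tp": tp,
        "fp": fp,
        "sensitivity": tp / (tp + fn) if (tp + fn) else None,
        "specificity": tn / (tn + fp) if (tn + fp) else None,
        "ppv": tp / (tp + fp) if (tp + fp) else None,
    }


# Invented data: 1 = incident JIA, 0 = unlikely JIA.
probs = [0.99, 0.98, 0.97, 0.60, 0.98, 0.40, 0.20, 0.10, 0.05, 0.02]
labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
strict = metrics_at_threshold(probs, labels, 0.97)
lenient = metrics_at_threshold(probs, labels, 0.22)
print(strict)
print(lenient)
```

Raising the threshold removes false positives at the cost of missed cases, and lowering it does the reverse. This is the pattern the true-positive and false-positive curves in the figure display.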
